LLM/SLM Development

Build dedicated LLMs that turn enterprise knowledge into a continually evolving competitive advantage

Generic LLMs alone cannot deliver a competitive advantage. Dedicated LLMs trained on an enterprise's own knowledge, know-how, and data have become essential. By making tacit knowledge explicit and putting it to work in a secure environment, companies can raise operational quality while accumulating intellectual assets. Building a proprietary LLM is also a strategic priority for securing data sovereignty.

Challenges

Do you face these challenges?

- GPU Procurement and Operating Costs

Securing the large GPU resources needed to build a proprietary LLM is difficult, and cloud costs often balloon beyond expectations. The cost-effectiveness of fine-tuning and ongoing retraining is especially hard to assess.

- Mitigating Hallucinations

Inaccurate or fabricated answers generated by LLMs keep enterprises from deploying them in production, whether in customer-facing or internal operations. Establishing trustworthiness is especially difficult in domains that demand high precision, such as legal and medical.

- Barriers to Converting Tacit Knowledge Into Data

The specialized knowledge and operational know-how needed for an industry-specific LLM often reside with individual employees and go undocumented, and there is no established process for structuring them into a form usable as training data.

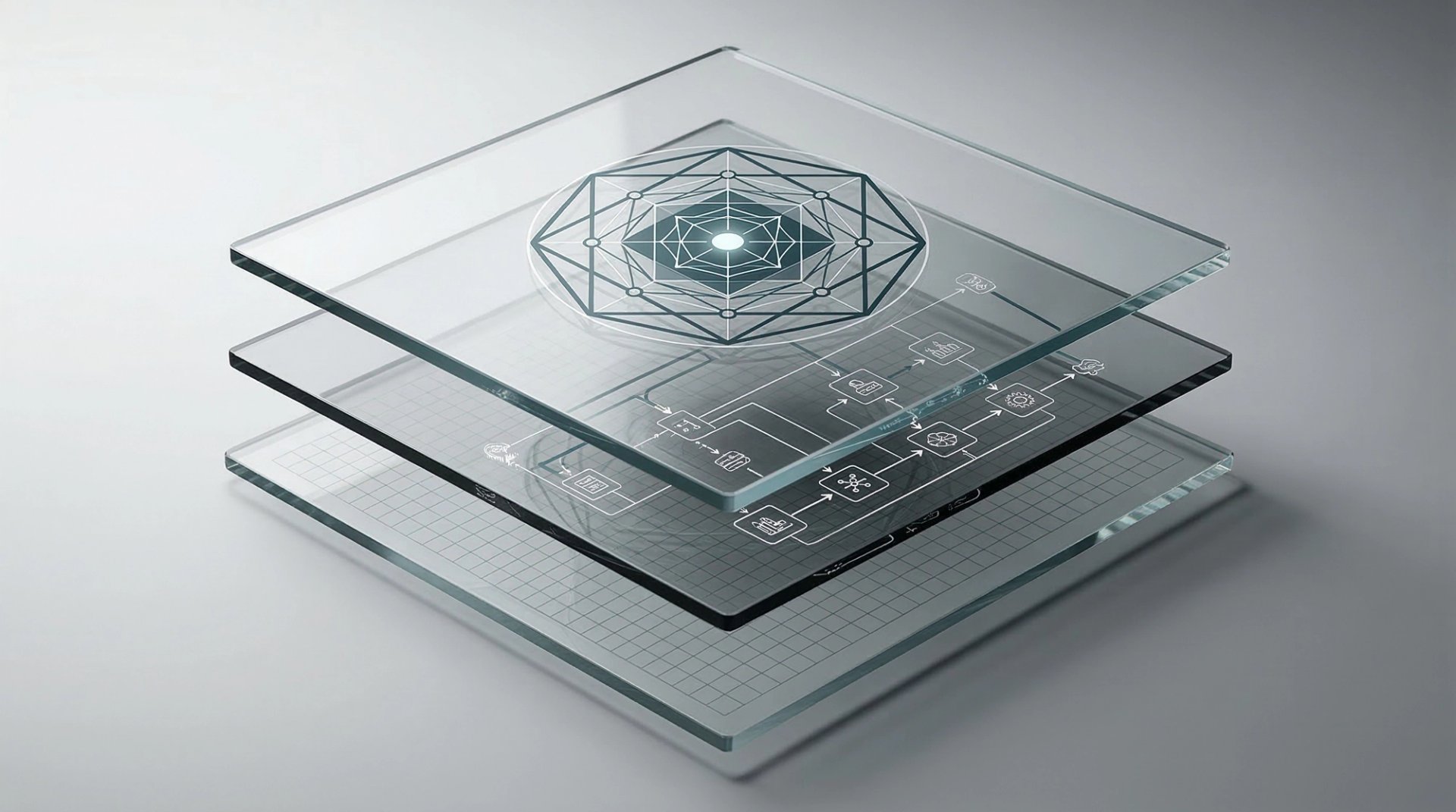

What Sets enableX Apart

enableX goes beyond surface-level support for adopting generic LLMs: we have the engineering capability to build enterprise-specific LLMs and SLMs, either from scratch or through fine-tuning. Our greatest differentiator is an established process for converting tacit knowledge into training data. We design the entire pipeline end to end, from operational interviews and knowledge extraction to quality-assurance frameworks built on RAG and fact-checking mechanisms. Rather than simply delivering a model, we also provide the operational design needed to nurture it as a continuously evolving "knowledge asset" that generates genuine competitive advantage.

What enableX Can Do

We optimize every stage of LLM/SLM development end to end, from choosing between building from scratch and fine-tuning to GPU procurement strategy and cloud cost optimization. By structuring the sales materials, technical documents, and customer-interaction records lying dormant within your company and training a dedicated model on them in a secure environment, we deliver high-precision, industry-specific responses that generic LLMs cannot match. To mitigate hallucinations, we embed a RAG architecture and feedback loops, ensuring the quality your teams need to keep using the model with confidence.

“An LLM is not something you finish building; it is something you raise. enableX's design philosophy covers build-out, operations, and continuous improvement.”

DeepTech Executive Director

Let's talk in detail

Our expert team will provide tailored proposals

Key Features

Why Choose Us

Safe Instruction-Data Creation Capability

The accuracy of a proprietary model is determined by the quality of its training data. enableX has proprietary capabilities and know-how for properly handling personal and confidential information and for creating "high-quality instruction data" on which AI can be trained safely and ethically.

Know-How for Vetting Large-Scale Dialogue Data

For "data vetting and cleansing," the most painstaking yet crucial work in AI development, we draw on insights gained from handling massive volumes of dialogue data. This allows us to improve the "naturalness of responses" and "contextual comprehension" that largely determine how practical a model is.



Ready to transform your business?

Discover the value LLM/SLM Development can deliver to your business.