To the Front Lines of AI Social Implementation — Interview with Komura, Head of the Technology Team

Having led the launch of AI teams at Accenture, Deloitte Tohmatsu, and Nomura Research Institute, Komura speaks as Head of enableX's technology team about the essential challenges of business development in the AI era and an ambitious vision looking ahead to 2030.

2025.11.16

Starting his consulting career at Accenture, Komura then led the launch of AI teams at Deloitte Tohmatsu and Nomura Research Institute, served as a business owner at an AI company, and joined enableX. Having worked on the social implementation of AI for over a decade, Komura — Head of enableX's technology team — speaks about the essential challenges of business development in the AI era and an ambitious vision aimed at 2030.

From consulting and SIer to the world of AI. A decade of pursuing specialization and distinctiveness

— First, please tell us about your career to date.

I joined Accenture as a new graduate in 2012, working on enterprise-wide projects across supply chain, ERP, SRM, CRM, and Business Process Re-engineering (BPR). I then moved through several consulting firms, and what was distinctive about that path is that I worked in organizations ranging from thousands of people down to just a few.

The larger the organization, the more visible its internal politics became, both vertical and horizontal, and the less flat it was. Looking back on my career, smaller firms suited me better in terms of accountability and a sense of ownership. In small teams I had broad autonomy, and clients also tended to engage as accountable participants, which let me commit to both quantitative and qualitative goals. As I moved into AI fields such as natural language, voice, image, and LLMs, I wanted to deliver results while bringing more of my own specialization and distinctiveness to bear, and that is what led me to enableX.

— You made the full shift to AI around 2020. What was the trigger?

In fact, my first encounter with AI goes back to around 2016-2017. Even then, large enterprises had a growing need to leverage internal and external data, and I had opportunities to work on natural-language projects. Those were the days when terms such as knowledge search engines, semantic vectors, and ontologies were drawing attention. However, as I continued to deliver value through consulting, I started to feel something was off. Consultants ultimately see things only from the consultant's perspective: at the RFP and technology-selection phases, we present the options and leave the judgment to the client. That is, in a sense, a form of nudging, and also a form of tunnel vision. I began to question whether we were really providing value ourselves. So, in parallel with my work, I set out to acquire AI engineering skills, research-level knowledge of DeepTech, and an academic perspective, so that I could do the work with my own hands.

In my third or fourth year of working, I pursued a U.S. MBA alongside my job. There I saw working professionals from different countries, with different positions and specialties, use their spare time to seriously tackle shared problems together. More than the academic perspective itself, what gave me essential insight was seeing the potential of action driven by the intellectual curiosity of global peers.

From around 2017, I was already coding and reading papers as a hobby in order to learn AI engineering. I had come to grips with Transformer technology by the time Google's BERT was published and put to use in 2018, so when what could be called the natural-language revolution took off around 2021, it felt technically inevitable to me. As a result, when ChatGPT appeared, I could focus on the business reasons it spread through the world so quickly, including the UI that made it feel familiar and the way the technology was delivered, and I had the foundation to view the trend with composure.

— What specific career choices did you make from there?

I wanted to engage more deeply with AI and also to launch new businesses. With that in mind, I joined a systems integrator (SIer) focused on AI that also had think-tank functions, where I worked on developing and delivering engagements for government, private, and public sectors, and on new business development in the neuroscience domain.

What I learned there, however, was how many constraints are unique to large organizations. Doing what I wanted to do required all kinds of coordination, which made it hard to move freely; even developing customers and new businesses required confirming the right channels in advance. Working within those internal constraints while needing to take the lead myself in bringing people and businesses together, I went through real trial and error. My desire to "move faster, on the premise of being the business owner" grew stronger. For the first time in my career, I chose the leap over the safe position: I took on business development at an AI company, launched its consulting practice, and acted as the business owner.

Only there did I truly enter the social implementation of AI in earnest, working on the front lines with various companies and organizations on natural-language processing and on developing LLMs and fine-tuned models from the zero-to-one stage.

Looking back, it began with the unease I felt at consulting firms over tunnel vision, then moved through my interest in advanced technologies centered on AI, and finally into an environment where I could put my own hands to work on social implementation. It was during this period that I was finally able to establish something I could call true specialization.

The essential challenge of AI adoption — the "chasm that emerges among the three sides" of business, engineering, and research

— In your view, what is the largest challenge in modern AI adoption?

I have looked at AI adoption from three perspectives — "business," "engineering," and "research & development." There are bottlenecks that emerge among these three sides, and I see this as an enormous challenge.

The business side tends to fixate on whether something is usable, easy to use, and produces results, which skews its criteria for understanding the technology and judging where to apply it.

Engineers, on the other hand, tend to focus on building what they want to build, spending time on things that do not matter from a business perspective while treating usability and user needs as an afterthought. The R&D side tends to be pulled by "this is how research ought to be," and clings to priorities that are far removed from social implementation.

Ideally, the business side should move closer to engineering and research, and engineers and researchers should move closer to the business side. But they end up talking inside their separate, segmented communities, with no real mutual understanding. In this state, regardless of whether the project is internal or external, the goals end up misaligned, and projects tend to stall.

— Is this challenge about knowledge, or about stance?

Fundamentally, it is a matter of stance: how deeply you are willing to step into unfamiliar territory, and what meaning you give to doing so. If that gap were filled, a virtuous cycle would start turning, so leaving it unfilled feels like a waste. The generative-AI era is more than ever a "learn by doing" world, so in my view the era in which you could succeed by relying on third-party guidelines or document-based directions is over.

— How should humans engage with AI?

The premise is that humans are involved in the AI's judgment process: checking and correcting the results, or teaching the AI what "good" looks like. This is called HITL (Human-in-the-Loop). Many people will go as far as evaluating the output. But without understanding the underlying logic, the feedback stays superficial, just "good" or "bad," with no concrete path to improving the result.

Generative AI is fundamentally an inference model: it narrows the possibilities to a top set of candidates and chooses the one that fits best to produce the next character or piece of text. Once you deeply understand these mechanics, you build an intuitive sense of which feedback (data, parameter settings, and so on) produces which behavior.
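To make the mechanics described above concrete, here is a minimal sketch of one common way to choose the next token, often called top-k sampling. It is an illustration under stated assumptions, not a description of any particular product: the function name `top_k_sample`, the candidate words, and their scores are all hypothetical, and a real model scores tens of thousands of tokens rather than four.

```python
import math
import random


def top_k_sample(scores, k=3, temperature=1.0, rng=None):
    """Pick one token from the k highest-scoring candidates.

    scores: dict mapping candidate token -> raw model score (logit).
    temperature: must be > 0; lower values favor the top candidate more.
    """
    if rng is None:
        rng = random.Random()
    # Keep only the k candidates with the highest raw scores.
    top = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]
    # Softmax over the survivors; subtracting the max keeps exp() stable.
    max_score = top[0][1]
    weights = [math.exp((s - max_score) / temperature) for _, s in top]
    tokens = [tok for tok, _ in top]
    # Draw one token at random, in proportion to its weight.
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical scores for the word that follows "The project is".
scores = {"stalled": 2.1, "done": 1.9, "late": 1.2, "purple": -3.0}
print(top_k_sample(scores, k=3, temperature=0.7))
```

Because the final step is a weighted random draw, the same input can yield different outputs from run to run. `k` and `temperature` are examples of the parameter settings mentioned above: setting `k=1` always returns the top candidate, while a larger `k` or a higher temperature lets lower-ranked candidates through more often.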

Furthermore, the goal must not stop at "becoming able to use AI in operations." It is also a human responsibility to decide "what to teach" and "how to teach." It is the same as studying for an exam: you can't expect to score on what you haven't studied, and you can't expect improvement when you don't know what is being learned. Many efforts stop short of that level. Convenience and designs that make AI immediately usable are of course important, but humans must also be willing to step toward the AI and put it into practice. That, I believe, is what leads to value creation through human-AI collaboration.

— I see. On the other hand, engineers and researchers have their own challenges.

That's right. I also see many cases where they, in turn, are not aligned with the business or the client. Projects that end at the proof-of-concept (PoC) stage have become the norm. The reason, I sense, is that building becomes the goal and takes up all the energy, so the project ends without any commitment to evaluation and improvement. It is a superficial way of proceeding. In many projects, engineers or researchers interpret the client's request from their own perspective, build a prototype, and wrap up after a bit of trial verification. The proper approach is to immerse yourself in the client's needs, repeat trial verification, fail and try again, and turn the cycle rapidly until the issues are resolved.

An organizational approach that understands the difference between AI and IT is the key

— There also seem to be organizational issues behind why AI adoption does not work well.

Exactly. In many companies, AI budgets and IT budgets are allocated within exactly the same framework. But the very mechanism at the core of the technology is different.

IT is a "mechanism that reliably does what it is told," and as long as there are no bugs, you can pursue 100% accuracy. AI, by contrast, runs on inference, so you do not always get a 100%-correct answer. Yet when past successes with IT are deeply rooted, there is a tendency to bring the same expectations to AI.

That is precisely why AI projects also need to be given different objectives. For example, objectives such as "uncovering internal AI talent through hands-on environments" or "building the organization on AI-native premises" are very effective.

In fact, among the clients we supported, there are cases where they used the assets we provided to assemble their own AI and offer it externally. Fundamentally, AI is well suited to in-house enablement. Because trial verification can be done quickly, by flexibly setting project objectives, you can achieve far larger outcomes.

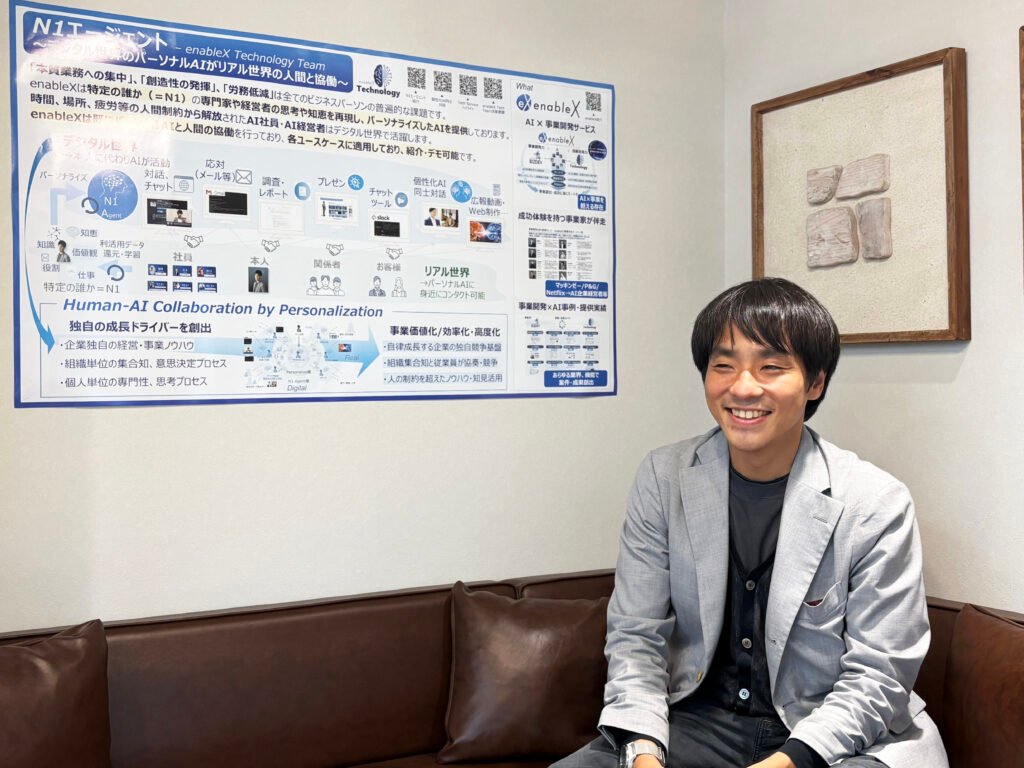

Three challenges to realize at enableX — AI/DX Service, DeepTech Hub, Neuron AI Labo

— What would you like to realize at enableX, Mr. Komura?

I want to develop three major activities. There is also a video where my AI introduces them, so please refer to the link below.

enableX Technology Activities Introduction Video

The first is the AI/DX Service.

Leveraging the experience and know-how I have built up, we will deliver services that step into personalization, digital-clone technology, sentiment analysis, and further into multimodal AI. At enableX, we have members who have driven monetization and service delivery hands-on, as business owners. In an environment with experts in marketing, sales, and data utilization, I am confident we can create synergies.

The second is building the DeepTech Hub.

The technologies and devices Japanese companies develop are often remarkable, but in many cases those companies lack the capability to deliver that value optimally against client challenges and needs. We will therefore partner with multiple companies, build a structure for optimal delivery, and create a mechanism for offering technology and pivoting businesses. What is difficult for a single company becomes possible, for both engagement delivery and service provision, when two companies combine. By expanding those options, we can address every kind of customer challenge. I see this as another activity that accelerates social implementation.

The third is Neuron AI Labo.

This is an initiative looking ahead to "Next AI." Many companies are working on generative AI, but we look beyond it and focus on "deepening our understanding of humans." Neuromarketing and neurotech, which observe intuitive human reactions, are our entry domains. With brain-wave measurement and applied neuroscience, we analyze where humans intuitively focus their attention and what draws their interest, and apply this to consumer purchasing behavior, advertising content, product placement, and more. The advanced application areas are wide-ranging. Combined with AI, this research and development will pursue the expansion of human functions and of creativity itself, and Neuron AI Labo is where we will carry it out.

The most fascinating 20 years, in which humans and AI fuse

— Looking ahead to the medium- and long-term future, what do you think the world will be like?

Taking Ray Kurzweil's 2045 Singularity as the reference point, I am convinced that the next 20 years will be the most fascinating in human history, even set against the 200,000 years since Homo sapiens appeared. That is precisely why I want to stand on the front line and contribute.

Take, for example, what people think when they see something. Meta has now announced smart-glass devices, and these glasses come with eye-tracking. Simply by living, everything a person sees can be captured. If the device also measures pulse and pupil contraction, you can tell when the person is excited, when their interest is engaged, and when they are focused. With these signals as the starting point, you can intervene with visual content, or propose personalized products informed by that information, nudging consumption and human behavior more intuitively. The era in which forming such touchpoints becomes the norm is right around the corner.

Of course, there are many hurdles to overcome — privacy concerns, security, and more — but against the backdrop of the day-by-day evolution of generative technology, a world in which marketing and advertising are optimized will surely arrive. As a basic stance, I want to affirm a future in which humans, AI, and advanced technology fuse to present new value, and contribute to building a future in which that potential is maximized.

Practice itself is the value — embodying a culture of "let's try it"

— Please tell us why you chose enableX, and the kind of people you want to work with.

What I place enormous weight on is whether you feel excitement and motivation in the face of "unknown knowledge."

I started my career in consulting, a fairly rigid industry, and spent a meaningful period of time in that environment. As the years went by, my specialization solidified; in parallel, the industries, issues, and types of client I gravitated toward also solidified. There is a positive side to this, but the expectations my firm and my clients placed on me also became fixed, and as a result the work I was asked to do gradually came to consist mostly of drawing on my existing experience and knowledge. I asked myself whether experience formed only within that environment deserved to be called specialization, and felt restless and out of place. So I changed careers, even after turning 30.

So I want today's young people to choose an environment where they can feel growth even after 30. And if you feel something is missing in your current work, I want you to know that enableX offers an environment for taking on new things. In a fixed environment, you risk reaching a state in which you can only provide value in that environment, and that becomes a barrier to starting new things or taking on challenges.

People who want to open new paths, dive into unexplored territory, and take an interest in things they have not done before: those are the people I want to work with.

— Finally, please share any message.

One of enableX's behavioral guidelines is "Be the principal." Adding my own interpretation: don't just say "I want to build something like this" — actually build it. Even if what you can show first is a bit rough, that is fine. I think that is the kind of person we want.

Also, please value gathering your own unique inputs. Precisely because we are in the AI era, gathering the same mass-market information as everyone else loses its meaning. To make your own mind and thinking distinctive, you need distinctive inputs. New combinations of information produce creative value. Every time a new book on brain science comes out, I imprint it on my brain, and recently I've also been reading books like "How to Build a Villain" (laughs). When you convert varied ideas and source material into ideas of your own, and turn them into practical outputs rather than just talking about them, the results naturally become distinctive because the inputs were. I think there are many people at enableX who find that interesting and take it seriously.

Of course, "breaking the mold rests on having mastered the mold" — the fundamentals matter. On top of that, with inference-based technologies like AI, practical curiosity matters — touching them, watching how they move, and elevating them through trial and error so that you can improve them.

Knowledge alone cannot win in the AI space. Personally, I believe the people who suit it are those who become this kind of practitioner and can break through that wall, or who have the potential to. Don't dwell too long in your head; keep your hands and feet moving, and take the approach of "don't give up," "don't stop until you can do it," and "just try building it." I think enableX suits people who create the environment to explore on their own, or who actively seek out such environments. I am human too, of course, and I aim to relentlessly pursue and expand human potential, both my own and that of others.