Democratizing Data Analytics — The Frontier of AI Agent Adoption, Starting with Snowflake Cortex

Many enterprises struggle with data analytics work that is inefficient and dependent on specific individuals. Requests concentrate on a small number of engineers, and business decisions are delayed. enableX Executive Officer Kuramoto Gaku discusses his vision for resolving this fundamental challenge with AI agents, and the path to making it real.

Structural Challenges on the Data Analytics Front Line

——What kinds of issues does today's data analytics work face?

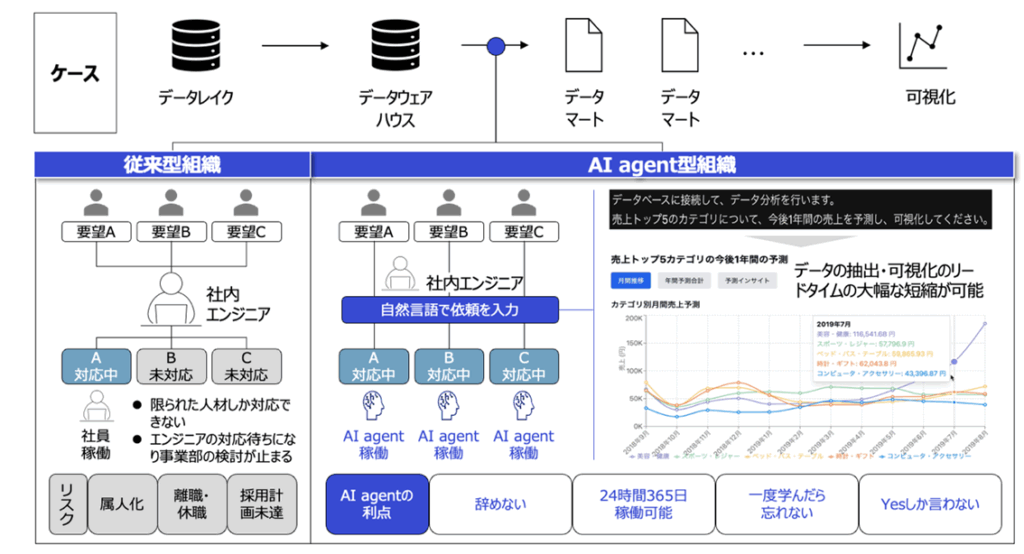

Kuramoto: At many enterprises, data analytics work depends on a limited group of talent. Requests pile up with in-house engineers, queues form, and meanwhile the business side's deliberations come to a halt. This is a daily occurrence.

Even more serious is talent risk. When specialists resign or go on leave, the work simply stops; the same happens when hiring falls short of plan. As data-driven management becomes increasingly central, this structural problem is significantly eroding enterprise competitiveness.

——So AI agents become the solution to this.

Kuramoto: Yes. AI agents have strengths humans don't. They don't quit, they can operate 24/7, they don't forget what they've learned, and they "only say yes." That last one sounds like a joke, but it's actually an important point.

What we're aiming for is an environment where, simply by instructing an AI agent in natural language, you can extract and visualize data. For example, type "forecast for the top 5 categories by revenue over the next year," and you get the result instantly. Lead times can be reduced dramatically.

The Wall of Access Control and Governance

——It sounds ideal, but implementation seems to come with its own challenges.

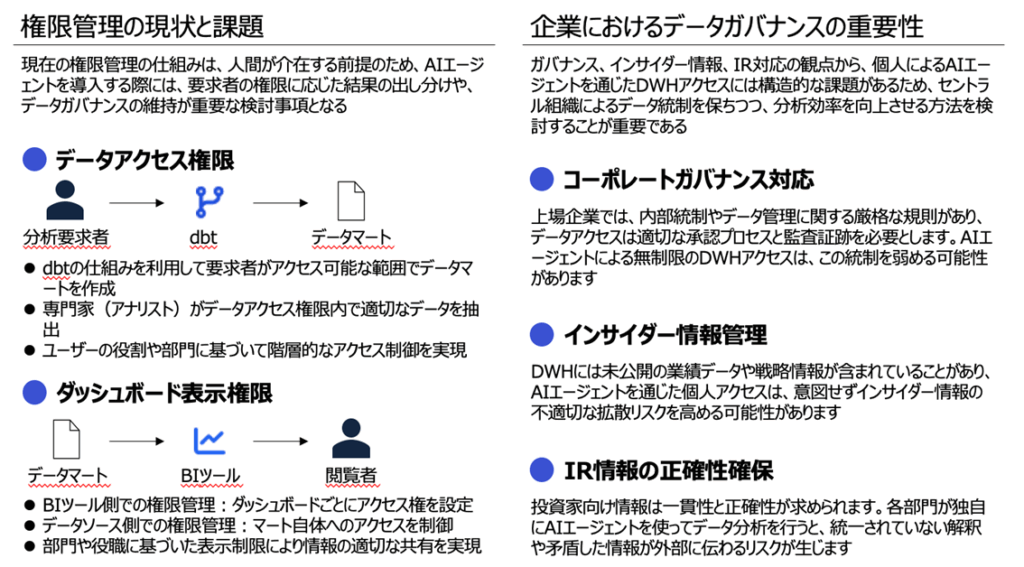

Kuramoto: Exactly. The biggest challenge is access control and data governance. Current access-control systems are designed on the premise that a human is in the loop. When introducing AI agents, two things become critical: returning different results depending on the requester's permissions, and maintaining data governance.

For listed companies in particular, allowing individuals to access the data warehouse through AI agents poses structural challenges for corporate governance, insider-information management, and the accuracy of IR information.

——Could you describe the specific challenges?

Kuramoto: For example, if you use dbt (data build tool), you need to create data marts within the scope the requester is permitted to access. You also need access control on both the BI tool side and the data source side.

Furthermore, if an AI agent can access all data, you create a conflict with each user's individual permissions. Yet over-restrict it, and the AI's value is lost. How to resolve this dilemma is the key.
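One way to picture this dilemma is a check that runs before the agent executes anything: the tables in the agent's plan are compared against what the requester's role may read. The following is only a minimal sketch; the role names, table names, and the `allowed_tables` and `authorize` helpers are hypothetical, and in a real deployment the grants would come from the data platform's own access-control settings.

```python
from dataclasses import dataclass

# Hypothetical role-to-table grants (assumption for illustration only).
ROLE_GRANTS = {
    "marketing_analyst": {"ga4_events", "campaigns"},
    "finance_analyst": {"ga4_events", "invoices", "payments"},
}


@dataclass(frozen=True)
class Request:
    user: str
    role: str
    tables: frozenset  # tables the agent plans to read for this request


def allowed_tables(role: str) -> set:
    """Return the tables a role may read (empty set for unknown roles)."""
    return set(ROLE_GRANTS.get(role, set()))


def authorize(request: Request) -> None:
    """Raise PermissionError if the plan touches tables outside the requester's grants."""
    denied = set(request.tables) - allowed_tables(request.role)
    if denied:
        raise PermissionError(
            f"{request.user} ({request.role}) may not read: {sorted(denied)}"
        )
```

With this shape, the agent itself can be capable of reading everything, while each individual request is limited to the requester's scope.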

A Phased Approach: Why Start with GA4

——How will you work through these challenges?

Kuramoto: We propose a phased approach. We start with data from GA4 (Google Analytics 4).

We chose GA4 for three reasons. First, low domain specificity. As a standard tool used worldwide, the need for specialized expertise is comparatively low. Second, low methodological complexity. The work is largely aggregation-centric and doesn't require advanced statistics or machine learning models. Third, clarity of purpose. Use cases such as measuring the effectiveness of marketing initiatives are simple and well-defined.

——So you're introducing it from a central organization.

Kuramoto: Yes. Rather than rolling it out enterprise-wide from day one, we deploy it in stages, starting from the central analytics organization. This allows us to maintain governance while driving efficiency.

What matters is strictly ensuring that analysis stays within the data scope each user is permitted to access. That is the prerequisite for AI agent adoption.

An Often-Overlooked Challenge — Unifying Term Definitions

——Are there any other points to watch out for?

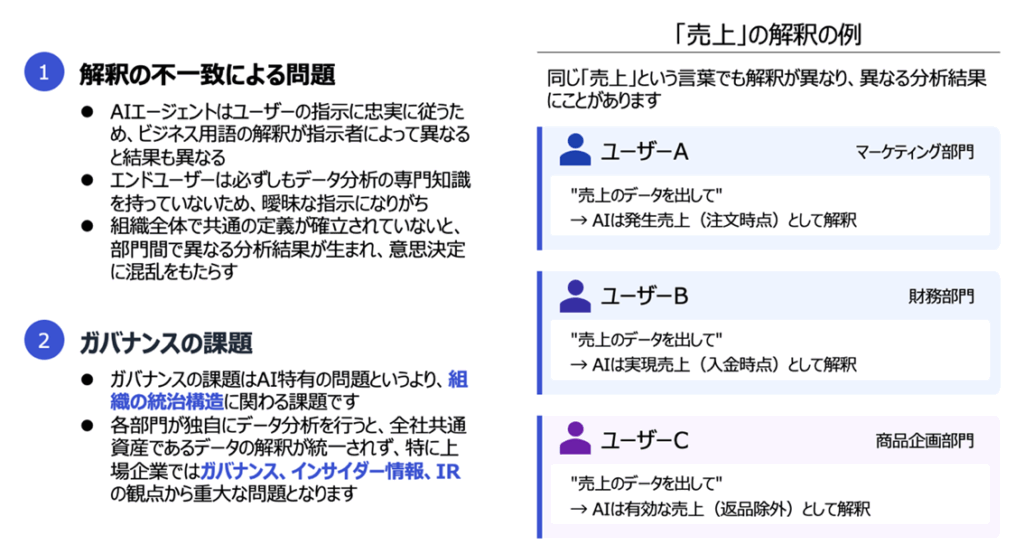

Kuramoto: There's actually a major challenge: differences in how terms are interpreted. Take the word "revenue": the marketing team may interpret it as "booked revenue (at the time of order)," the finance team as "realized revenue (at the time of payment)," and the product planning team as "effective revenue (excluding returns)."

Because AI agents follow the user's instructions faithfully, these interpretive differences translate directly into different analytical results. Without a shared definition across the organization, different departments produce different results, and decision-making is thrown into confusion.
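A shared definition can be made concrete as a glossary the agent must consult before it writes a query. The sketch below is an illustration under assumptions: the metric keys, the column names (`order_amount`, `paid_amount`, `return_amount`), and the `resolve_metric` helper are all hypothetical. It shows an ambiguous term such as "revenue" being rejected rather than silently interpreted.

```python
# Hypothetical shared glossary: each metric name maps to exactly one agreed
# definition. Column names are illustrative assumptions, not a real schema.
METRIC_GLOSSARY = {
    "booked_revenue": "SUM(order_amount)",                     # at time of order
    "realized_revenue": "SUM(paid_amount)",                    # at time of payment
    "effective_revenue": "SUM(order_amount - return_amount)",  # excluding returns
}

# Everyday terms that could mean more than one metric.
AMBIGUOUS_TERMS = {
    "revenue": ["booked_revenue", "realized_revenue", "effective_revenue"],
}


def resolve_metric(term: str) -> str:
    """Return the SQL expression for a term, refusing ambiguous or unknown ones."""
    key = term.strip().lower().replace(" ", "_")
    if key in METRIC_GLOSSARY:
        return METRIC_GLOSSARY[key]
    if key in AMBIGUOUS_TERMS:
        raise ValueError(
            f"'{term}' is ambiguous; choose one of {AMBIGUOUS_TERMS[key]}"
        )
    raise KeyError(f"'{term}' is not defined in the glossary")
```

Calling `resolve_metric("Booked Revenue")` returns the agreed expression, while `resolve_metric("revenue")` raises an error that lists the candidates, so the choice of definition goes back to the requester.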

——It's a fundamental governance issue, isn't it.

Kuramoto: That's exactly right. It's less an AI-specific problem and more an issue tied to the organization's governance structure. That's precisely why, alongside technology adoption, putting data governance in place is indispensable.

A Concrete Roadmap for Implementation

——Could you walk us through the implementation process?

Kuramoto: Our proposal is broadly divided into two phases.

The first phase is "AI Adoption Planning" and "Demo Environment Setup using GA4." We first survey the current data environment and consider scenarios for AI agent integration. From there, we run a concrete PoC (proof of concept) using GA4 data.

——What kind of validation do you carry out in the PoC?

Kuramoto: For example, we validate how accurately and efficiently an AI agent can perform analyses such as "sales by category, by month, and year-over-year." Where BigQuery integration is possible, we configure the connection; where it isn't, we download data from GA4 and run cleansing.
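To validate an agent's answer in a PoC like this, it helps to have a reference calculation to compare against. The following is a minimal sketch of "sales by category, by month, and year-over-year" in plain Python; the row layout `(category, "YYYY-MM", amount)` and the function name `monthly_sales_yoy` are assumptions for illustration, not a GA4 export format.

```python
from collections import defaultdict


def monthly_sales_yoy(rows):
    """Aggregate (category, 'YYYY-MM', amount) rows and compute year-over-year change.

    Returns {(category, 'YYYY-MM'): (total, yoy)}, where yoy is the relative
    change versus the same month one year earlier, or None if unavailable.
    """
    totals = defaultdict(float)
    for category, month, amount in rows:
        totals[(category, month)] += amount

    result = {}
    for (category, month), total in totals.items():
        year, mm = month.split("-")
        prev = totals.get((category, f"{int(year) - 1}-{mm}"))
        # YoY is undefined without a non-zero prior-year figure.
        yoy = (total - prev) / prev if prev else None
        result[(category, month)] = (total, yoy)
    return result


rows = [
    ("Shoes", "2023-01", 100.0),
    ("Shoes", "2024-01", 120.0),
    ("Shoes", "2024-01", 30.0),
]
report = monthly_sales_yoy(rows)
# report[("Shoes", "2024-01")] is (150.0, 0.5): 150 in total, up 50% year over year.
```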

What matters is running two things in parallel: prompt engineering so that the AI agent can reproduce past GA4 reports and typical analytical tasks, and a review of the GA4 event design and related setup. Through iterative learning and tuning, we raise accuracy to a practical level.

Snowflake Cortex — A Pragmatic Option

——Could you tell us about the technical approach?

Kuramoto: In practice, many enterprises start with Snowflake Cortex before reaching for MCP (Model Context Protocol).

Snowflake Cortex is the AI capability provided by Snowflake, making it a more pragmatic option for enterprises whose data already sits on Snowflake. From a security and governance perspective, since everything is completed within the existing Snowflake environment, the barrier to adoption is lower.

——How does this differ from MCP?

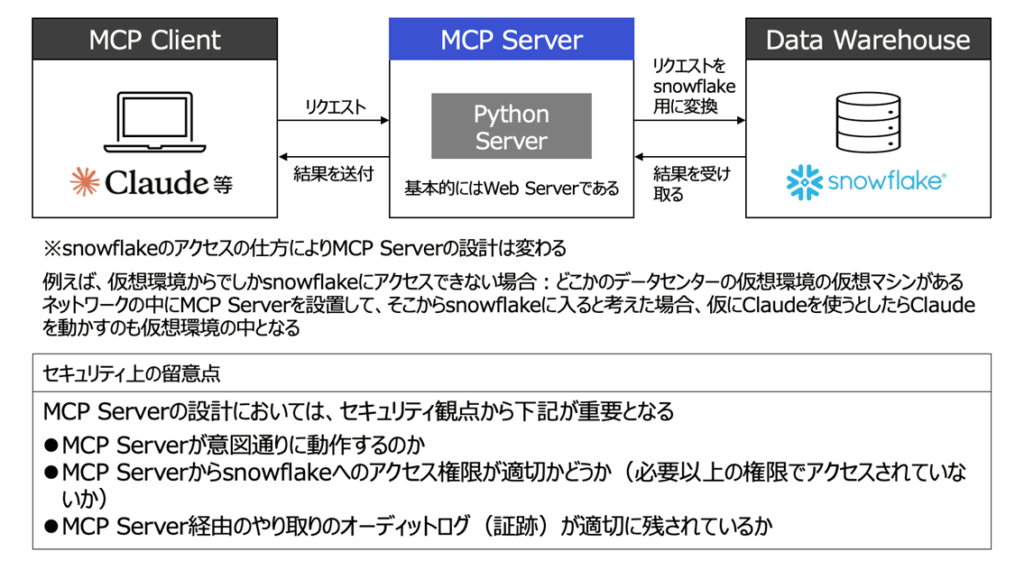

Kuramoto: MCP is a general-purpose protocol that lets you connect various AI agents to databases, but it requires building a new MCP server. On top of that, because it's still a relatively new concept, many enterprises are cautious about building one, for security reasons.

Snowflake Cortex, by contrast, is already integrated into the Snowflake environment, so no additional infrastructure build is required.
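As a rough illustration of what "already integrated" can look like, Cortex LLM functions are called from ordinary SQL, for example `SNOWFLAKE.CORTEX.COMPLETE(model, prompt)`. The sketch below only builds such a statement as a string and does not connect to Snowflake. The helper name `build_cortex_complete_sql` is hypothetical, and the model name in the usage note is an assumption, since model availability varies by account and region.

```python
def build_cortex_complete_sql(model: str, prompt: str) -> str:
    """Build a SELECT statement that calls SNOWFLAKE.CORTEX.COMPLETE.

    Backslashes and single quotes are escaped so the values form valid
    single-quoted SQL string literals. This is for illustration only; in
    real code, prefer your database driver's bind parameters.
    """

    def quote(value: str) -> str:
        escaped = value.replace("\\", "\\\\").replace("'", "''")
        return "'" + escaped + "'"

    return (
        f"SELECT SNOWFLAKE.CORTEX.COMPLETE({quote(model)}, {quote(prompt)}) AS answer"
    )
```

For instance, `build_cortex_complete_sql("mistral-large", "Summarize last month's GA4 traffic trends.")` produces a single statement that can be run in an existing Snowflake session, under that session's own role and permissions.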

Especially for enterprises with a Snowflake-centered data foundation, the pragmatic approach is to first prove the value of AI utilization with Cortex, and then move to more advanced mechanisms such as MCP as needed.

Toward Innovation in the Data Analytics Workflow

——Could you tell us about the end state you're aiming for?

Kuramoto: What we're aiming for is innovation in the data analytics workflow, automation of the development process, and business knowledge that no longer depends on specific individuals.

For example, when an in-house engineer poses a theme such as "identify the product categories whose sales rise on rainy days and the underlying factors," the AI agent identifies the necessary tables, sets the join keys, performs preprocessing, and generates the SQL automatically — and even handles visualization.
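The steps named here (identify tables, set join keys, preprocess, generate SQL) can be sketched as a small plan object that is rendered into a query. This is an illustration under assumptions, not a description of how any particular agent works: the `AnalysisPlan` fields, the `render_sql` helper, and the table and column names in the usage note are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalysisPlan:
    fact_table: str  # table holding the measure, e.g. daily sales
    dim_table: str   # table holding the condition, e.g. daily weather
    join_key: str    # column shared by both tables
    group_by: str    # dimension to report on
    measure: str     # numeric column to aggregate
    condition: str   # preprocessing filter applied before aggregation


def render_sql(plan: AnalysisPlan) -> str:
    """Render a plan as one SQL statement.

    Identifiers are interpolated as-is, so the plan must come from a
    trusted source (this sketch performs no validation).
    """
    return (
        f"SELECT f.{plan.group_by}, AVG(f.{plan.measure}) AS avg_{plan.measure}\n"
        f"FROM {plan.fact_table} AS f\n"
        f"JOIN {plan.dim_table} AS d ON f.{plan.join_key} = d.{plan.join_key}\n"
        f"WHERE {plan.condition}\n"
        f"GROUP BY f.{plan.group_by}\n"
        f"ORDER BY avg_{plan.measure} DESC"
    )
```

A plan such as `AnalysisPlan("daily_sales", "daily_weather", "sales_date", "category", "amount", "d.is_rainy = TRUE")` yields average sales per category on rainy days. Answering the full question would also need the same figure for non-rainy days as a baseline, which is left out of this sketch.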

——How does the role of humans change?

Kuramoto: Humans become free to focus on more creative, higher-value work. Liberated from tasks like data collection and processing, they can spend their time on discovering insights and developing strategy.

What's important is that AI agents are not a replacement for humans; they are exceptional assistants. When AI and humans work together, the speed and accuracy of data cleansing and structural modeling improve dramatically.

——Finally, please share a message for our readers.

Kuramoto: Data-driven management is no longer an option; it is a requirement. Yet in reality, many enterprises continue to struggle with inefficiency and dependence on specific individuals in data analytics.

I believe we can realize the democratization of data analytics through practical methods: starting with a pragmatic approach that leverages existing infrastructure such as Snowflake Cortex, and gradually evolving toward more sophisticated mechanisms.